Microservices Explained in 10 Minutes

What are microservices? Services for ants?

If you have a Derek Zoolander-level understanding of server systems, then don’t worry: we’ve got you covered. Just smile, nod your head occasionally, and “fake it till you make it”, or at least until this video ends, whichever comes first.

This is microservices explained in 10 minutes.

Most people have a fairly rudimentary understanding of how databases and servers work on the back end, and of how information gets to you, the user. Nine times out of ten, a layperson can explain the basics of how data travels without knowing the correct terminology or being even remotely familiar with the system.

The process they’d describe is known as monolithic architecture. It’s a tried and tested approach, and it’s how a great many applications have traditionally been built and run. But today, we’re talking about microservices. Confused yet?

Think of monolithic architecture as the predecessor and microservices as the new kid on the block. There is a bit of a “passing of the torch” going on, yet both approaches have their own merits and drawbacks that should be weighed when choosing which route is right for you.

They both do different things really well, and are not in direct competition – despite outward appearances.

THE DEAL WITH MONOLITHIC

Monolithic architecture can be described as the classical method of service development, “old school” for want of a better word. Think mainframes, clients, and other techno-babble you’ve heard from The Matrix.

It’s a singular, unified system, a remnant from the days when individual services relied on a single, overwhelmingly powerful mainframe that was usually lurking in some faraway basement. It’s pretty basic, and is made up of three main working parts.

First, there is the database, consisting of many tables that store, you guessed it, data. Second, there is a server-side application that handles things like HTTP requests, executes domain-specific logic, and retrieves and updates data in the database. It also populates the HTML views sent to the third part, the client-side user interface, which is generally a combination of HTML pages and JavaScript running in a web browser or app.
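To make the three parts concrete, here is a minimal Python sketch of a monolith, assuming an in-memory SQLite database and a hypothetical product catalogue. The names `create_database`, `apply_discount` and `handle_product_list` are illustrative, not from any real framework. The point is that data access, the business rule and the HTML rendering all live in one process and one codebase.

```python
import sqlite3
from html import escape


# Part 1, the database: one table, held in memory for this sketch.
def create_database() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT, price_cents INTEGER)"
    )
    conn.executemany(
        "INSERT INTO products (name, price_cents) VALUES (?, ?)",
        [("Desk lamp", 1299), ("Notebook", 899)],
    )
    conn.commit()
    return conn


# Part 2, the server-side application: domain logic and HTML rendering in one process.
def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical business rule: a percentage discount, rounded down to the cent."""
    return price_cents * (100 - percent) // 100


def handle_product_list(conn: sqlite3.Connection, discount_percent: int = 0) -> str:
    """Stand-in for an HTTP GET handler: query the database, apply the rule, build HTML."""
    rows = conn.execute("SELECT name, price_cents FROM products ORDER BY id").fetchall()
    items = "".join(
        f"<li>{escape(name)}: ${apply_discount(price, discount_percent) / 100:.2f}</li>"
        for name, price in rows
    )
    return f"<ul>{items}</ul>"


if __name__ == "__main__":
    db = create_database()
    # Part 3, the client-side UI, would be a browser rendering this HTML.
    print(handle_product_list(db, discount_percent=10))
```

Calling `handle_product_list(db, discount_percent=10)` returns `<ul><li>Desk lamp: $11.69</li><li>Notebook: $8.09</li></ul>`. Changing any one of the three concerns means changing, retesting and redeploying this one program.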

Scaling this massive brain meant vertical scaling: as workload demands increased, you just kept adding raw computing power and memory (CPUs and RAM) to the same system.

SO, WHAT ARE MICROSERVICES?

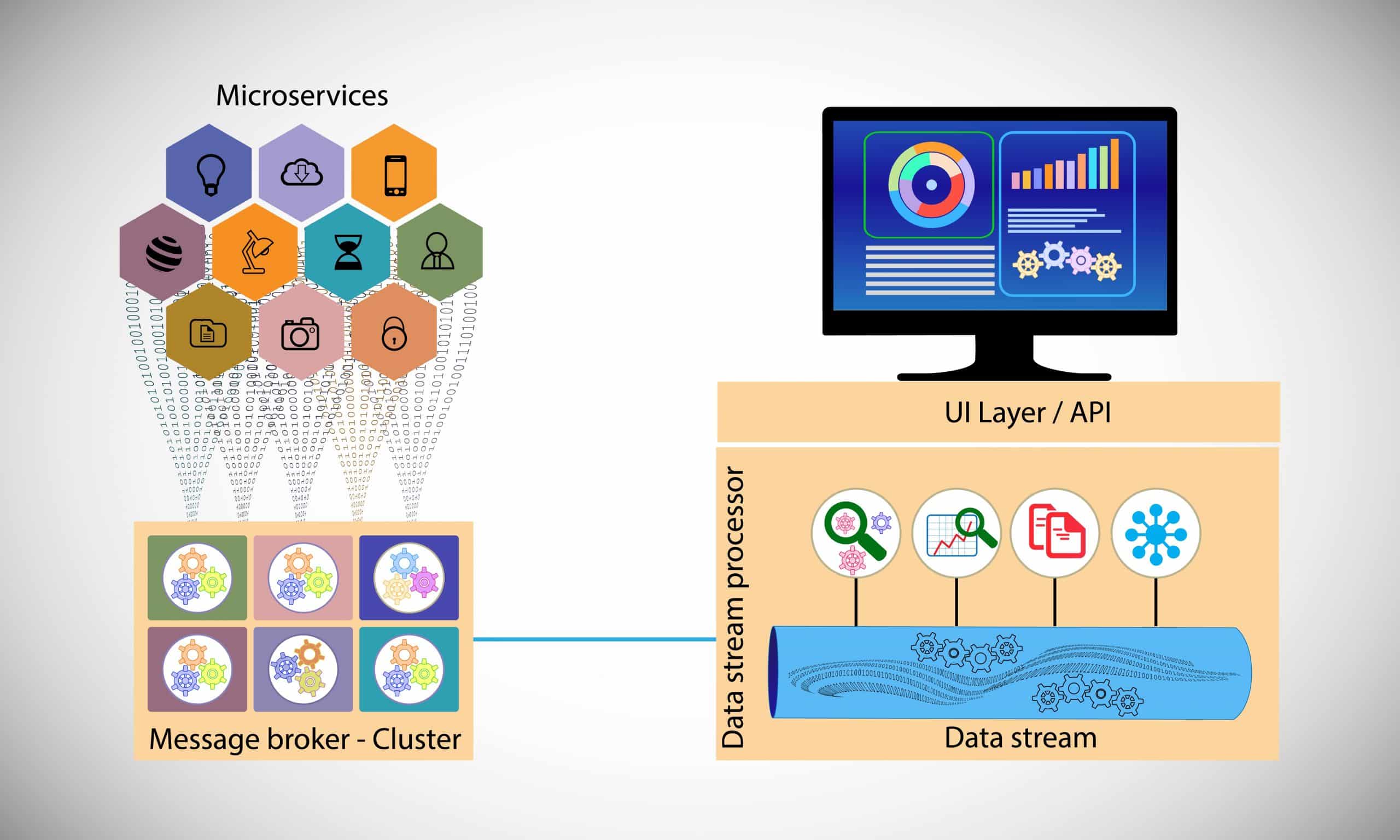

Going the microservices route is a little different: it’s a much newer approach to building the systems that store and serve data. Instead of a single mainframe driving all services in a tightly knit system, we have loosely coupled services, each responsible for a discrete task, running separately and communicating with each other through simple application programming interfaces (APIs) on the back end.

There is no big stacked server, but rather several smaller systems, each dedicated to a short list of functions. These small segments make up a collective, a larger whole in which all the services ultimately function as one overall core process, just like a pie cut into many slices.
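Here is a minimal sketch of two loosely coupled services talking through an API, using only Python’s standard library. It assumes a hypothetical inventory service that exposes stock levels as JSON over HTTP, and a hypothetical order service that knows only the inventory service’s URL and response format, never its internals. In a real deployment each would run as its own process, usually on its own host. Here both share one process so the example is self-contained.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical inventory service: it owns the stock data and exposes it over HTTP.
STOCK = {"lamp": 3, "notebook": 0}


class InventoryHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        # Assumed URL shape for this sketch: /stock/<item>
        item = self.path.rsplit("/", 1)[-1]
        body = json.dumps({"item": item, "quantity": STOCK.get(item, 0)}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args) -> None:
        pass  # silence the default per-request logging to stderr


def start_inventory_service() -> ThreadingHTTPServer:
    # Port 0 lets the OS pick a free port; requests are served on a background thread.
    server = ThreadingHTTPServer(("127.0.0.1", 0), InventoryHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


# Hypothetical order service: it knows only the inventory API, not how stock is stored.
def can_place_order(inventory_url: str, item: str, quantity: int) -> bool:
    with urllib.request.urlopen(f"{inventory_url}/stock/{item}", timeout=5) as response:
        stock = json.load(response)
    return stock["quantity"] >= quantity


if __name__ == "__main__":
    server = start_inventory_service()
    host, port = server.server_address
    base_url = f"http://{host}:{port}"
    print(can_place_order(base_url, "lamp", 2))      # True: 3 in stock
    print(can_place_order(base_url, "notebook", 1))  # False: none in stock
    server.shutdown()
    server.server_close()
```

The only thing the two services share is the assumed HTTP contract (`GET /stock/<item>` returning `{"item": ..., "quantity": ...}`), which is what makes them loosely coupled.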

The same service can be reused in more than one business process, or across multiple channels, depending on the need. Because services are exposed through standardised application programming interfaces, service owners can change implementations, modify their systems and change records without any downstream impact, as long as the interface itself stays the same. In short, maintenance or upgrades to one service need not mean “server downtime” for the rest of the system.
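The idea of changing an implementation without downstream impact can be sketched with a Python `Protocol` standing in for a service’s published API contract. This is an assumption for illustration: between real services the contract would be an HTTP or messaging API rather than a Python type, and `ExchangeRates`, `HardcodedRates`, `CachedRates` and the rate values are all made up.

```python
from typing import Callable, Protocol


class ExchangeRates(Protocol):
    """The published contract: consumers rely only on this method's signature."""

    def rate(self, currency: str) -> float: ...


class HardcodedRates:
    """Version 1 of the service: rates kept in a dictionary (made-up values)."""

    def rate(self, currency: str) -> float:
        return {"EUR": 0.5, "GBP": 0.25}[currency]


class CachedRates:
    """Version 2: a different internal design (fetch once, then cache), same contract."""

    def __init__(self, fetch: Callable[[str], float]) -> None:
        self._fetch = fetch
        self._cache: dict[str, float] = {}

    def rate(self, currency: str) -> float:
        if currency not in self._cache:
            self._cache[currency] = self._fetch(currency)
        return self._cache[currency]


def price_in(currency: str, usd_cents: int, rates: ExchangeRates) -> int:
    """Downstream consumer: identical code whichever implementation it is given."""
    return round(usd_cents * rates.rate(currency))


if __name__ == "__main__":
    print(price_in("EUR", 1000, HardcodedRates()))                    # 500
    print(price_in("EUR", 1000, CachedRates(HardcodedRates().rate)))  # 500
```

Swapping `HardcodedRates` for `CachedRates` changes how the service works internally, but `price_in` needs no edits, because it depends only on the contract.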

MONOLITHIC PROS

So, monolithic systems get a bad rap. They’re portrayed as bloated, geriatric systems that put stability ahead of performance. Despite that reputation, their big plus is simplicity. It’s a single application, so cross-cutting concerns such as logging, rate limiting, and security features like audit trails and DoS protection can all be handled in one place: everything is essentially running together in the one big app.

Then there are the lower setup and operational costs, the relative ease of monitoring, logging, and testing the system, and a clear performance advantage. At the end of the day, function calls and shared memory access within a single process are faster than inter-process communication, especially communication over a network.
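In a monolith, a cross-cutting concern can be written once and applied to every request handler in the same codebase. The sketch below assumes a Python decorator as that single place, with a simple sliding-window rate limiter and an in-memory audit trail. `cross_cutting`, `RateLimiter`, `AUDIT_LOG` and the two handlers are hypothetical names, and a real application would persist its audit log and usually limit per client rather than app-wide.

```python
import time
from collections import deque
from functools import wraps

# In-memory audit trail; a real app would write this to durable storage.
AUDIT_LOG: list[str] = []


class RateLimiter:
    """Sliding-window limiter: at most `limit` calls in any `window`-second span."""

    def __init__(self, limit: int, window: float, clock=time.monotonic) -> None:
        self.limit = limit
        self.window = window
        self.clock = clock
        self.calls: deque = deque()

    def allow(self) -> bool:
        now = self.clock()
        # Forget calls that have slid out of the window.
        while self.calls and now - self.calls[0] >= self.window:
            self.calls.popleft()
        if len(self.calls) < self.limit:
            self.calls.append(now)
            return True
        return False


# One app-wide limit, assumed here to be 3 requests per minute.
LIMITER = RateLimiter(limit=3, window=60.0)


def cross_cutting(handler):
    """Written once, applied to every handler in the single codebase."""

    @wraps(handler)
    def wrapper(user: str, *args, **kwargs):
        if not LIMITER.allow():
            AUDIT_LOG.append(f"{user} {handler.__name__} rejected")
            return "429 Too Many Requests"
        AUDIT_LOG.append(f"{user} {handler.__name__} allowed")
        return handler(user, *args, **kwargs)

    return wrapper


@cross_cutting
def view_profile(user: str) -> str:
    return f"profile of {user}"


@cross_cutting
def update_email(user: str, email: str) -> str:
    return f"{user} email set to {email}"
```

Under the assumed limit, three quick calls go through and a fourth returns `"429 Too Many Requests"`. Every attempt, allowed or rejected, is recorded in `AUDIT_LOG`, and no handler had to implement either concern itself.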

MONOLITHIC CONS

It’s the old-fashioned way of doing things, from a time when technology wasn’t nearly as advanced and everyday computing hardware couldn’t hold a candle to a powerful mainframe. Now? Even the multi-core CPU in a home PC can do a pretty good job of acting as a rudimentary server.

The other downside is that the system’s processes are so tightly coupled within the one application that it becomes incredibly difficult to tell the services apart. As the system grows, it becomes harder to scale or change individual parts independently, and isolating, diagnosing, and remedying any bugs or side effects introduced by changes becomes a huge task. Any upgrade typically means redeploying the whole application, often with downtime.

MICROSERVICES PROS

It’s new and shiny? All jokes aside, microservices architecture is anything but micro; the name is a red herring. Though each service usually runs on smaller hardware than the average monolith, the separated systems, scaled horizontally (by adding more machines or instances rather than bigger ones) and each running a small suite of services, still take up a fair bit of real estate and don’t lack the power needed to handle high demand.

As such, they can be organized better, since each system has a specific job and is not concerned with doing any other. Each set of services is decoupled from the rest, making it easier to rebuild or reconfigure for the purposes of different applications. The result is fast, independent delivery of individual parts within a larger integrated system.

If a particular service needs to perform better, that service’s stack can be upgraded on its own instead of taking the whole system offline to update hardware. One part can be boosted according to your needs, and since no other services have to chip in, there are fewer of the mistakes and errors typically caused by crossing system boundaries inside a single monolithic system.
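Boosting one part usually means scaling it horizontally: running more copies (replicas) of just that service behind a load balancer. Here is a minimal sketch, assuming round-robin balancing and two hypothetical services, `search` and `billing`, where only the busy one gets extra replicas. `Replica` and `ServicePool` are illustrative names; real systems would use a dedicated load balancer or orchestrator rather than an in-process loop.

```python
from itertools import cycle


class Replica:
    """One running copy of a service (a stand-in for a container or VM)."""

    def __init__(self, service: str, index: int) -> None:
        self.name = f"{service}-{index}"
        self.handled = 0

    def handle(self, request: str) -> str:
        self.handled += 1
        return f"{self.name} handled {request}"


class ServicePool:
    """Round-robin load balancing across however many replicas one service has."""

    def __init__(self, service: str, replicas: int) -> None:
        self.replicas = [Replica(service, i) for i in range(replicas)]
        self._next = cycle(self.replicas)

    def handle(self, request: str) -> str:
        return next(self._next).handle(request)


if __name__ == "__main__":
    # Only the busy service is scaled out; the quiet one keeps a single replica.
    search = ServicePool("search", replicas=3)
    billing = ServicePool("billing", replicas=1)
    for i in range(6):
        search.handle(f"query-{i}")
    billing.handle("invoice-1")
    print([r.handled for r in search.replicas])  # [2, 2, 2]
    print(billing.replicas[0].handled)           # 1
```

After six search requests, each search replica has handled two while billing stays on its single replica. Round-robin is simply the easiest policy to show here; the design point is that capacity is added to one service without touching the others.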

MICROSERVICES CONS

There are two main drawbacks to using a microservices-based system. The first is that as you build out the architecture and add new services, cross-cutting concerns may emerge that were not anticipated in the design process. Dealing with each one carries an overhead cost: either you incorporate a separate module into every service in the collective, or you run these concerns in another service layer dedicated to routing that traffic.

As you can imagine, the other negative is cost. Operational costs are substantially higher with a microservices system, which needs frequent testing and maintenance on both hardware and virtual machines to ensure all the moving parts are communicating properly.
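The “service layer dedicated to routing that traffic” from the first drawback is often called an API gateway. Here is a minimal sketch of one, assuming prefix-based routing and an API-key check plus an access log as the cross-cutting concerns. `ApiGateway`, the routes and the key are hypothetical, and the services are plain functions standing in for separate deployments.

```python
from typing import Callable

Handler = Callable[[str], str]


class ApiGateway:
    """Single entry point: applies cross-cutting concerns once, then routes by path prefix."""

    def __init__(self, valid_keys: set[str]) -> None:
        self.valid_keys = valid_keys
        self.routes: dict[str, Handler] = {}
        self.access_log: list[str] = []

    def register(self, prefix: str, service: Handler) -> None:
        self.routes[prefix] = service

    def handle(self, path: str, api_key: str) -> str:
        # Cross-cutting concern 1: authentication, checked here instead of in every service.
        if api_key not in self.valid_keys:
            self.access_log.append(f"401 {path}")
            return "401 Unauthorized"
        # Routing: the longest registered prefix that matches the path wins.
        for prefix in sorted(self.routes, key=len, reverse=True):
            if path.startswith(prefix):
                # Cross-cutting concern 2: access logging, also done once, here.
                self.access_log.append(f"200 {path}")
                return self.routes[prefix](path)
        self.access_log.append(f"404 {path}")
        return "404 Not Found"


# Stand-ins for separately deployed services.
def orders_service(path: str) -> str:
    return f"orders service saw {path}"


def users_service(path: str) -> str:
    return f"users service saw {path}"


if __name__ == "__main__":
    gateway = ApiGateway(valid_keys={"demo-key"})
    gateway.register("/orders", orders_service)
    gateway.register("/users", users_service)
    print(gateway.handle("/orders/42", "demo-key"))  # orders service saw /orders/42
    print(gateway.handle("/users/7", "wrong-key"))   # 401 Unauthorized
    print(gateway.access_log)                        # ['200 /orders/42', '401 /users/7']
```

The key check and the access log live only in the gateway, so neither service has to implement them. The trade-off is one more component to run, monitor and keep available, which feeds directly into the operational cost described above.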

THE CURRENT TREND

While monolithic architecture still has a reason to live and is not yet past its expiration date, there is a shift towards microservices. If the application is small, the build may consist of just a handful of services, with the option to add features over time as the need arises. Meanwhile, the ability to maintain and upgrade services without system-wide downtime is a big draw for larger companies that value efficiency over simplicity.

WHAT’S IT ALL MEAN?

For the time being, both monolithic and microservices architectures have a place in how the back end of a system functions. Although huge mainframes making way for newer technology can feel like the “passing of the old guard”, in reality the size of the application and the complexity of its services will ultimately dictate which method is chosen. There will likely always be a need for a simple system to handle tasks too mundane to warrant the overhead of a set of separate, integrated services functioning as a whole.

THE FUTURE OF MICROSERVICES

It’s clear that the shift towards microservices represents a change in the way architects and developers build solutions. Adoption of microservices applications is increasing at an exponential rate, with ResearchAndMarkets projecting that the microservices platform segment will grow at more than 23.4 percent to reach $1.8 million by 2025.

In fact, tech futurists believe that component architecture and microservices will become so commonplace that the term itself will evaporate, since it will represent the norm rather than an alternative.

Kofi Group is proud to be a source of knowledge and insight into the startup software engineering world, and offers a multitude of resources to help you learn more, improve your career, and help startups hire the best talent. If you are interested in learning more about what we do and how we can help you, get in touch or watch our YouTube videos for additional information.

Try our other blogs on hot topics, like “Why React Native is the Most Popular Cross-Platform Technology” or “The 7 Most Disruptive Software Engineering Trends of 2021”.


Kofi Group has helped 100+ startups hire software and machine learning engineers. We fill most of the roles we recruit for with five or fewer candidates presented.

Contact us today to start building your dream team!